When people first try an AI music generator, they often expect a shortcut and end up disappointed by randomness. The real challenge is not whether a system can make sound. It is whether a system can take a half-formed musical intention (mood, pace, texture, vocal energy, lyrical direction) and turn it into something that feels organized enough to build on. What makes the experience interesting is not instant perfection, but the way a rough idea can become a more coherent draft in minutes.

That shift matters because music creation often stalls before production even begins. A person may know the emotional tone they want, but not the chord choices, arrangement logic, or vocal phrasing that would help them move forward. In my observation, tools become useful when they reduce that gap between instinct and output without pretending that creativity has become automatic. ToMusic is most understandable from that angle: not as a magic replacement for musicianship, but as a system for translating intent into a song-shaped result.

Why Text Inputs Matter More Than Buttons

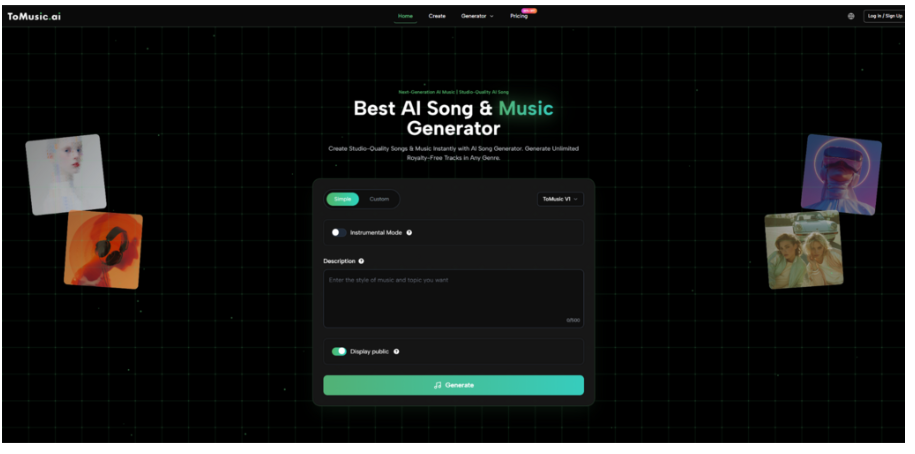

Most music tools become easier to evaluate when you stop asking whether they are “smart” and start asking what kind of input they reward. ToMusic is built around language-based direction. You can enter a descriptive prompt, or you can begin with lyrics and let the system build music around them. That sounds simple, but it changes the whole user experience.

Instead of beginning with a dense production interface, the process begins with meaning. You describe style, mood, pacing, instruments, or vocal characteristics. The system then interprets that input and maps it onto a musical output. In practice, that makes the platform approachable for people who think in references and emotions rather than theory terms.

Why Description Becomes Creative Control

The more clearly you describe a track, the more likely the result will feel intentional rather than generic. A vague prompt may still generate a usable draft, but a more structured description usually gives the music a stronger identity. This is one reason text-first generation has become appealing to creators who work fast.

Why Lyrics Change The Starting Point

When a user begins with words instead of mood tags, the project starts from narrative rather than atmosphere. That tends to create a different creative rhythm. Lyrics imply phrasing, section length, emphasis, and sometimes even the emotional contour of the performance. The platform’s lyrics-based route therefore feels less like “background music generation” and more like early-stage song construction.

How The System Seems To Read A Song Brief

The platform presents itself as understanding key musical descriptors such as genre, mood, tempo, instrumentation, and voice characteristics. That gives a useful clue about how the workflow is meant to be used. It is not just looking for keywords. It is trying to convert a short creative brief into a combination of musical decisions.

From Mood To Arrangement Choices

If you ask for something cinematic, intimate, upbeat, or melancholic, you are not only describing feeling. You are also pushing the system toward different rhythm density, instrumental weight, and melodic behavior. In my testing of similar systems, this layer matters more than many users expect.

From Tempo To Perceived Energy

Tempo often changes how listeners judge confidence, urgency, and emotional distance. A prompt with a clear pace target usually produces more stable results than one that only names a genre. That is especially true when users want music for video intros, short-form content, or lyrical demos.

From Voice Style To Song Identity

Vocal direction changes whether a track feels polished, experimental, soft, dramatic, or commercially oriented. Even when the output is not perfect, vocal framing often determines whether a song feels memorable enough to revisit.

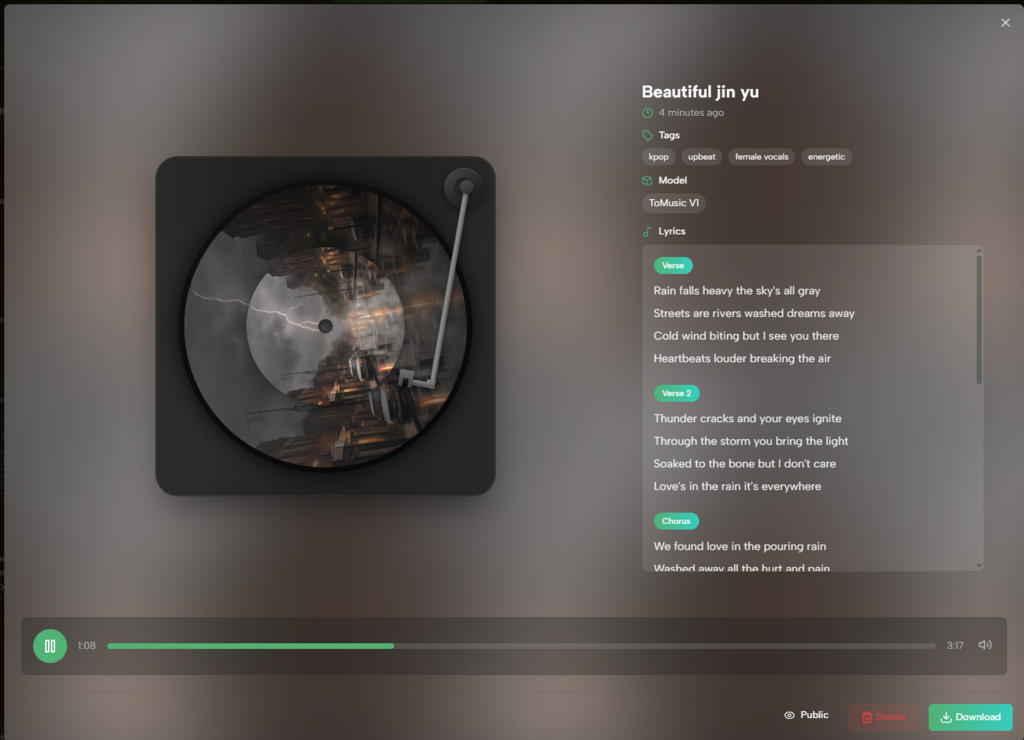

What The Four Models Suggest About Product Design

One of the more useful aspects of ToMusic is that it does not present everything as one black box. The platform offers V1, V2, V3, and V4, each with different positioning. That alone tells users something important: generation quality is not one-dimensional.

| Model | Practical Reading | Song Length |
| --- | --- | --- |
| V1 | Faster entry and lighter generation | Up to 4 minutes |
| V2 | Expanded atmosphere and layered sound | Up to 8 minutes |
| V3 | Richer harmony and more complex arrangement | Up to 8 minutes |
| V4 | More realistic vocals and stronger control | Up to 8 minutes |

This structure encourages comparison rather than blind trust. Instead of assuming one model suits every project, users can match the model to the job. That is a healthier expectation than believing one output engine should cover every musical need equally well.
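One way to make "match the model to the job" concrete is to treat the table as a small lookup. The sketch below is only an illustration: the length limits come from the table above, while the names `MODEL_LIMITS_MIN` and `models_for_length` are hypothetical and are not part of any ToMusic API.

```python
from typing import List

# Maximum song length in minutes per model, copied from the table above.
# The dictionary and function names are hypothetical, for illustration only.
MODEL_LIMITS_MIN = {"V1": 4, "V2": 8, "V3": 8, "V4": 8}


def models_for_length(target_minutes: float) -> List[str]:
    """Return the models whose stated length limit covers the target."""
    return [
        name
        for name, limit in MODEL_LIMITS_MIN.items()
        if target_minutes <= limit
    ]


# A six-minute track rules out V1 but leaves the other three to compare.
print(models_for_length(6))
```

Length is only the first filter. The choice among the remaining models still depends on whether the project needs atmosphere, harmonic complexity, or vocal realism.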

A Realistic ToMusic Workflow In Three Steps

The official flow is fairly direct, which is part of the appeal. It does not bury the main action under too many menus.

Step 1. Choose A Model And Input Method

The first decision is whether to begin with a prompt or with lyrics, and then which model to use. That single choice already influences whether the session feels exploratory or goal-driven.

Step 2. Describe Style, Mood, And Structure

Users then provide the creative brief. This can include genre, emotional tone, tempo, instrumentation, or vocal direction. At this stage, clarity matters more than length.
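For readers who like to keep briefs consistent across attempts, the idea can be sketched as a small template. This is a minimal sketch under stated assumptions: `SongBrief` and `to_prompt` are hypothetical names, the field list simply mirrors the descriptors mentioned above, and the output is plain text to paste into a prompt box, not a call to any real ToMusic interface.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class SongBrief:
    """Hypothetical container for the descriptors a brief can include."""
    genre: str
    mood: str
    tempo_bpm: Optional[int] = None
    instruments: Tuple[str, ...] = ()
    vocal_style: Optional[str] = None

    def to_prompt(self) -> str:
        """Join only the fields that were filled in into one short line."""
        parts = [f"{self.mood} {self.genre}"]
        if self.tempo_bpm is not None:
            parts.append(f"around {self.tempo_bpm} BPM")
        if self.instruments:
            parts.append("featuring " + ", ".join(self.instruments))
        if self.vocal_style:
            parts.append(f"{self.vocal_style} vocals")
        return ", ".join(parts)


brief = SongBrief(
    genre="indie folk",
    mood="melancholic",
    tempo_bpm=84,
    instruments=("acoustic guitar", "cello"),
    vocal_style="soft female",
)
print(brief.to_prompt())
```

Keeping the brief as separate fields makes it easier to change one element at a time, such as tempo or vocal style, and hear what that single change does to the result.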

Step 3. Generate, Review, And Compare Versions

Once the track is generated, the result can be reviewed, saved, and compared with alternate outputs. In many cases, improvement comes from variation rather than from expecting the first result to solve everything.

Why A Lyrics-First Creator Might See It Differently

A writer who already has verses and hooks is not looking for the same thing as a content creator who just needs mood music. That is where “lyrics to music” becomes a more useful framing than a generic “make me a song” label. The lyrics-first route changes the platform from a sound generator into a composition partner for early drafts.

For lyric writers, the benefit is not just speed. It is hearing what words feel like when phrased, paced, and placed inside a musical container. A lyric on a blank page can seem complete until melody reveals awkward repetition, weak section contrast, or a chorus that lacks lift. In that sense, generation becomes feedback.

Where The Platform Looks Most Useful

Not every user wants the same outcome, and that is why the platform makes more sense when tied to use cases.

Demo Writing For Song Concepts

People with unfinished lyrical ideas can test emotional direction before investing in full production.

Fast Sound Sketches For Content Creators

Short-form video makers often need music that expresses a mood quickly, even if it is not meant to be a final commercial release.

Idea Expansion For Non-Producers

Writers, marketers, and founders may have a strong creative concept but no production background. A text-first workflow lowers the barrier for them.

What Limits Still Matter In Practice

Tools like this become more believable when their limitations are stated plainly. Results still depend heavily on prompt quality. Songs may need multiple attempts before they feel aligned with the intended mood. And even when a track sounds impressive on first listen, creators may still want to revise lyrics, pacing, or arrangement in later stages.

Why The First Draft Is Rarely The Final One

Generated music often works best as a draft surface. It can reveal direction, but it may not settle every detail. That is not a flaw unique to this platform. It is part of the broader reality of AI-assisted creation.

Why Clear Expectations Improve Satisfaction

Users who treat the output as a starting point usually get more value than users expecting a perfect release-ready record every time. In my view, that mindset is the difference between disappointment and productive experimentation.

Why Iteration Feels Built Into The Product

The platform’s model choices, prompt-based flow, and save-and-compare logic all suggest that iteration is part of the intended experience rather than an accidental side effect.

What ToMusic Actually Changes For Creators

The most interesting thing about ToMusic is not that it generates songs from text. Many tools can now claim some version of that. The more meaningful shift is that it gives structure to the earliest, vaguest phase of music creation. It offers a bridge between “I know the feeling I want” and “I have something I can react to.”

That may sound modest, but creative work often depends on exactly that kind of bridge. When a platform helps users move from intention to a draft with identifiable form, it does more than save time. It changes how often people are willing to begin.