The transition from individual experimentation to institutionalized production is the single greatest hurdle for modern content teams. For the past eighteen months, most organizations have treated generative AI as a “black box” of creative surprises. An editor might use one model for a hero image, while a social media manager uses another for a quick background, resulting in a fractured visual identity that lacks cohesion.

Operationalizing these tools requires moving beyond the “slot machine” mentality of prompting. It demands a shift toward predictable workflows where the output of a model like Nano Banana Pro is not an end-point, but a reliable layer in a larger production stack. Achieving this level of discipline requires more than just better prompts; it requires a centralized environment that can bridge the gap between raw generation and final, brand-compliant assets.

The Consistency Problem in Distributed Teams

The primary friction point in team-based generative production is “aesthetic drift.” When multiple creators use different platforms or even different versions of the same model, differences in model weights and default settings produce inconsistent results. One creator might get hyper-realistic lighting while another gets a stylized, illustrative feel. Without a standardized toolkit, the brand loses its visual signature.

Content teams are finding that the solution lies in specialized models that prioritize speed and reliability over infinite variety. This is where Nano Banana Pro enters the workflow. By narrowing the scope of the generative output and focusing on efficiency, teams can create a baseline of quality that is easier to replicate across different seats. However, even with high-performance models, the “first-shot” generation is rarely production-ready. There is almost always a need for corrective editing, which is where the workflow often breaks down.

Refining the Output with a Unified AI Image Editor

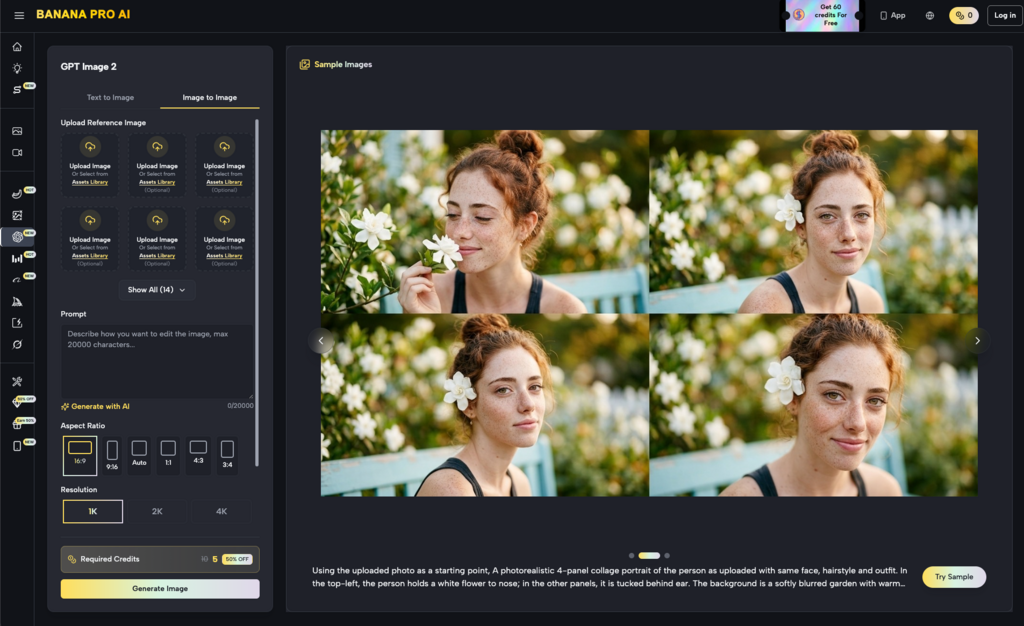

If the generative model provides the raw material, the editor provides the polish. In many teams, this involves downloading an AI-generated image and moving it into legacy software for cleanup. This context switching is a significant source of friction. It introduces file-handling overhead and separates the creative act of generation from the technical act of refinement.

Integrating an AI Image Editor directly into the generation pipeline changes the math. Instead of starting over with a new prompt when a generation is 90% perfect but has a minor flaw—like a distorted hand or a misplaced lighting source—teams can use in-painting and canvas-based tools to fix specific regions. This iterative approach is far more cost-effective than burning through credits on “re-rolls” in hopes that the model eventually gets the details right.
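The cost argument can be made concrete with a little arithmetic. The sketch below is illustrative only: the function names, credit prices, and success rates are hypothetical placeholders, not figures from any platform. It assumes each attempt succeeds independently with a fixed probability, so the expected number of attempts is one divided by that probability.

```python
def expected_reroll_cost(cost_per_generation: float, success_rate: float) -> float:
    """Expected spend when regenerating from scratch until one output is usable.

    Assumes independent attempts, so the number of tries follows a geometric
    distribution with mean 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_generation / success_rate


def expected_edit_cost(
    cost_per_generation: float,
    cost_per_edit: float,
    edit_success_rate: float,
) -> float:
    """Expected spend for one base generation plus in-painting passes until fixed.

    Assumes the base image is close enough to be fixable by regional edits.
    """
    if not 0 < edit_success_rate <= 1:
        raise ValueError("edit_success_rate must be in (0, 1]")
    return cost_per_generation + cost_per_edit / edit_success_rate


# Hypothetical numbers: replace with your own measured rates and prices.
reroll = expected_reroll_cost(cost_per_generation=1.0, success_rate=0.1)
edit = expected_edit_cost(cost_per_generation=1.0, cost_per_edit=0.5, edit_success_rate=0.5)
print(f"re-roll strategy: {reroll:.1f} credits, edit strategy: {edit:.1f} credits")
```

The comparison only holds when the base image is genuinely fixable. Teams would need to measure their own success rates before drawing conclusions from a model like this.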

It is important to acknowledge, however, that these editors are not magic wands. Even with advanced tools, there is a technical ceiling to what can be fixed. If the underlying composition of a Nano Banana generation is fundamentally flawed, an editor might struggle to rectify it without significant manual over-painting. Teams must learn to recognize when to pivot rather than trying to “fix” a broken base image indefinitely.

Nano Banana: Speed as a Production Catalyst

In high-volume environments—think performance marketing or rapid-response social teams—latency is a killer. Waiting sixty seconds for a high-resolution generation is acceptable for a single cover image, but it is a bottleneck when trying to iterate on twenty different ad variations.

This is the practical utility of Nano Banana: it is designed for velocity. For content teams, this speed allows for a “fail fast” methodology. Creators can test compositions and color palettes in seconds, discarding what doesn’t work before committing to a higher-fidelity render or a more complex video workflow. This rapid prototyping phase is often overlooked, but it is essential for keeping the baseline quality of finished assets high.

The limitation here is that speed often comes at the cost of extreme detail. While Nano Banana is excellent for core concepts and medium-scale assets, it may lack the intricate textural data required for large-format print or ultra-high-definition displays. Production leads must be clear with their teams about which tool is appropriate for which output format.

Bridging the Gap Between Text and Image

One of the most difficult aspects of operationalizing Banana Pro or similar platforms is the translation of brand guidelines into prompt logic. Most brand books are written in human-centric language, using terms like “innovative,” “approachable,” or “bold.” Generative models do not understand these as concepts; they respond to statistical associations learned from training data, which may not match what the brand means by the word.

To standardize production, teams should develop a shared “prompt library” specifically tuned to their chosen models. If the team is using Banana AI, the library should include specific tokens that reliably trigger the brand’s desired aesthetic. This reduces the cognitive load on individual creators and ensures that whether an intern or a creative director is at the helm, the output remains within a predictable range.
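One way a shared prompt library might be structured is as a small set of named templates that every creator renders the same way. The sketch below is a minimal illustration under stated assumptions: `PromptTemplate`, `LIBRARY`, `build_prompt`, and the style tokens are all hypothetical, and the tokens that actually trigger a given look have to be found by testing against the team's chosen model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    """A named, reusable set of style tokens appended to any subject."""

    name: str
    style_tokens: tuple[str, ...]

    def render(self, subject: str) -> str:
        # Subject first, then the fixed brand tokens in a stable order.
        return ", ".join((subject, *self.style_tokens))


# Hypothetical entries: real tokens must be validated against the model in use.
LIBRARY = {
    "hero": PromptTemplate(
        name="hero",
        style_tokens=(
            "soft natural light",
            "muted warm palette",
            "shallow depth of field",
        ),
    ),
    "social": PromptTemplate(
        name="social",
        style_tokens=("flat illustration", "bold outlines", "muted warm palette"),
    ),
}


def build_prompt(template_name: str, subject: str) -> str:
    """Look up a shared template and render it; unknown names raise KeyError."""
    return LIBRARY[template_name].render(subject)


print(build_prompt("hero", "a ceramic mug on a desk"))
```

Keeping the library in one versioned file means a change to the brand tokens reaches every seat at once, rather than living in individual creators' notes.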

We must remain cautious, however, about the “echo chamber” effect. If a team relies too heavily on a static library of prompts and a single model like Nano Banana Pro, the creative output can become stagnant. There is a delicate balance between operational consistency and the creative “happy accidents” that make generative media compelling in the first place.

Managing the Canvas Workflow

The shift from a “chat box” interface to a “canvas workflow” is perhaps the most significant evolution in creator tools. A canvas allows for spatial reasoning—placing assets, expanding backgrounds via out-painting, and layering different generations. This mirrors the traditional design environment but with the added power of generative filling.

For a content team, the canvas serves as a collaborative sandbox. A lead designer can set the layout, and a junior creator can populate the elements using Nano Banana. This hierarchical workflow is much closer to traditional agency structures than the solo-prompting model. It allows for oversight and quality control at every stage of the process.

The risk here lies in file management and versioning. Unlike vector files or layered PSDs, generative canvases can be difficult to “undo” or revert once multiple AI operations have been baked into the pixels. Teams need to establish strict save-state or duplication protocols so that a bad edit doesn’t destroy an otherwise perfect asset.
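A duplication protocol can be as simple as copying the asset to a numbered history folder before every destructive operation. The following is a rough sketch, assuming the canvas can be exported as a flat image file on disk; the `snapshot` and `restore` helpers and the naming scheme are hypothetical, not features of any particular editor.

```python
import shutil
from pathlib import Path


def snapshot(asset: Path, history_dir: Path) -> Path:
    """Copy `asset` into `history_dir` under the next free version number."""
    history_dir.mkdir(parents=True, exist_ok=True)
    # Count existing snapshots of this asset, e.g. banner.v001.png, banner.v002.png.
    existing = list(history_dir.glob(f"{asset.stem}.v*{asset.suffix}"))
    next_version = len(existing) + 1
    target = history_dir / f"{asset.stem}.v{next_version:03d}{asset.suffix}"
    shutil.copy2(asset, target)  # copy2 also preserves file timestamps
    return target


def restore(version_file: Path, asset: Path) -> None:
    """Overwrite the working asset with a previously saved snapshot."""
    shutil.copy2(version_file, asset)
```

In practice a creator would call `snapshot` before each in-painting or out-painting pass and `restore` when an edit goes wrong. The sketch assumes snapshots are never deleted; a team that prunes history would need a more robust numbering scheme.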

Technical Evaluation: When to Move Beyond the Basics

Not every task is suited for a “nano” model. While Nano Banana Pro is a workhorse for the majority of digital content, certain edge cases—like complex typography or specific architectural accuracy—still challenge even the best generative systems.

When evaluating a platform like Banana AI, teams should run “stress tests” on their specific use cases. If your brand relies heavily on depicting specific products or recognizable human faces, you need to determine whether the base model offers the fine-grained control required, or whether you will need to supplement the workflow with external LoRAs (Low-Rank Adaptations) or extensive manual retouching.

There is also the matter of spatial coherence. AI models often struggle with “gravity” and “attachment”—knowing that a hand should be gripping a handle, not just floating near it. Recognizing these failures early in the workflow is a skill that production teams must cultivate. The AI Image Editor is a powerful corrective tool, but it works best when the user understands the physics of the scene they are trying to depict.

The Human-in-the-Loop Requirement

Despite the advancements in automation, the most successful content teams are those that maintain a “human-in-the-loop” philosophy. Generative tools are force multipliers, not replacements for editorial judgment. A tool like Nano Banana Pro can generate a hundred images in the time it takes to drink a coffee, but it cannot decide which of those hundred aligns with a client’s subtle preference for “warm but not orange” lighting.

The role of the creator is shifting from “maker” to “curator and refiner.” This requires a different set of skills: an eye for compositional flaws, an understanding of how light interacts with surfaces, and the ability to steer a model through an iterative loop.

Scaling the Pipeline Without Losing the Soul

The ultimate goal of operationalizing these tools is to remove the “magic” and replace it with a process. When a team uses Banana Pro as a standardized environment, they are building a repeatable pipeline. This pipeline allows for the scaling of content volume without a linear increase in cost or headcount.

However, the industry is still in a state of flux. Model updates arrive frequently, and a workflow that is optimized for Nano Banana today might need adjustment by next month. Teams must remain agile, treating their SOPs as “living documents” rather than set-in-stone rules.

Operationalizing generative media is not a one-time setup; it is a commitment to continuous refinement. By focusing on integrated tools like the AI Image Editor and high-velocity models like Nano Banana, content teams can move past the chaos of experimentation and into a new era of professional, predictable AI production. The technology is ready; the question is whether the workflows are.